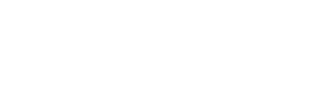

Recently, a disturbing message has been circulating across WhatsApp and other social media platforms. The message warns of well-dressed strangers approaching people in public places — malls, markets, and transport stations — asking for help operating their phone. According to the claim, the phone is secretly recording video, capturing your fingerprint, voice, and facial data. Within 30 minutes, scammers allegedly use artificial intelligence (AI) to clone your identity and take out loans in your name.

The message ends with urgent warnings: never help strangers with their phones, never read numbers aloud, and share the message to save others.

It sounds alarming. But how much of it is actually true?

Let’s examine the facts carefully.

Understanding the Viral Claim

The viral warning frames this as a new kind of “AI biometric identity scam.” It claims scammers are no longer interested in stealing your money directly — instead, they want your biometric data. According to the message, simply touching a stranger’s phone can capture your fingerprint, and speaking while looking at the screen allows AI to clone your voice and face.

The scenario suggests that within minutes, scammers can create a digital version of you and use it to access financial services, apply for loans, and leave you with debt.

The emotional tone is urgent and fear-driven, designed to make readers react quickly.

But cybersecurity must be based on facts, not fear.

What Is True: AI Voice and Face Cloning Exist

Artificial intelligence has advanced significantly in recent years. Today’s AI tools can:

- Clone a person’s voice using short audio samples

- Generate realistic face videos known as deepfakes

- Mimic facial expressions and speech patterns

These technologies are real and are already being used in entertainment, in research and, unfortunately, sometimes in scams.

Digital impersonation is a genuine cybersecurity concern.

However, having the technology and successfully using it to commit financial fraud instantly are two very different things.

What Is Misleading: Fingerprint Theft by Touching a Phone

One of the most alarming claims in the viral message is that touching a stranger’s phone allows scammers to capture your fingerprint.

In reality, modern smartphones do not work this way.

Fingerprint authentication systems:

- Store encrypted fingerprint templates locally on the device

- Do not store or expose actual fingerprint images to apps

- Do not transmit fingerprint data through video calls or recordings

Simply touching someone’s phone cannot transfer your fingerprint into their system.

This part of the message is highly misleading and not supported by how biometric security works.

Can Scammers Clone You and Take Loans in 30 Minutes?

This is another major exaggeration.

Financial institutions typically use multiple layers of security, including:

- BVN (Bank Verification Number) or NIN (National Identification Number) verification in Nigeria

- One-Time Passwords (OTPs) sent to your registered phone number

- Device authentication

- Linked bank account verification

- Multi-factor authentication

Even with advanced AI, face or voice data alone is usually not enough to open accounts, approve loans, or transfer funds.
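To make the layered-security point concrete, here is a minimal sketch of an approval check that requires every layer to pass. It is an illustration only, not how any particular bank or lender works: the `LoanRequest` class, its field names, and the `approve` and `failed_checks` functions are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class LoanRequest:
    """Hypothetical set of checks a lender might run; names are illustrative."""
    id_number_verified: bool   # e.g. BVN or NIN matched
    otp_confirmed: bool        # code sent to the registered phone number
    known_device: bool         # request came from a recognised device
    bank_account_linked: bool  # ownership of the linked account confirmed
    biometric_match: bool      # face or voice check passed


def failed_checks(request: LoanRequest) -> list[str]:
    """Return the names of the checks that did not pass, in field order."""
    return [name for name, passed in vars(request).items() if not passed]


def approve(request: LoanRequest) -> bool:
    """Approve only when every layer passes, not just the biometric one."""
    return not failed_checks(request)


# A scammer who holds a convincing face or voice clone and nothing else.
cloned_only = LoanRequest(
    id_number_verified=False,
    otp_confirmed=False,
    known_device=False,
    bank_account_linked=False,
    biometric_match=True,
)
```

In this sketch `approve(cloned_only)` returns `False`, and `failed_checks(cloned_only)` lists the four non-biometric layers that still stand in the way.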

Identity theft is possible, but it typically requires more information and access — not just a brief interaction in a public place.

Real Risks That Actually Exist

While the viral story exaggerates the threat, some real risks do exist in public and online interactions.

These include:

- OTP scams: If you read a verification code sent to your phone aloud, scammers can use it to access your accounts.

- Social engineering: Scammers manipulate people using urgency, trust, or emotional pressure.

- Account login traps: Being tricked into entering your credentials on someone else’s device.

- Deepfake impersonation: Using publicly available videos and audio from social media to mimic individuals.

These threats are real — but they typically require more direct cooperation or information from the victim.
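Reading an OTP aloud is a clear example of that kind of cooperation. The sketch below is a toy one-time-code service, written under simplifying assumptions: the `OtpService` class and its method names are hypothetical, and a real service would deliver the code by SMS or an authenticator app rather than return it. It shows that the server only checks the code itself and has no way of knowing who typed it.

```python
import hmac
import secrets


class OtpService:
    """Toy one-time-password service (hypothetical, for illustration only)."""

    def __init__(self) -> None:
        # Maps an account name to the code awaiting confirmation.
        self._pending: dict[str, str] = {}

    def send_code(self, account: str) -> str:
        """Generate a 6-digit code; a real service would text it to the owner."""
        code = f"{secrets.randbelow(10**6):06d}"
        self._pending[account] = code
        return code

    def verify(self, account: str, submitted: str) -> bool:
        """Check the submitted code. The pending code is consumed on the
        first attempt, right or wrong, so it can never be reused."""
        expected = self._pending.pop(account, None)
        if expected is None:
            return False
        # Constant-time comparison; the check knows nothing about the sender.
        return hmac.compare_digest(expected, submitted)
```

If the account owner reads the code aloud, a scammer who calls `verify` with it first gets `True`, and the owner's own later attempt with the same code gets `False`.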

Why Fear-Based Messages Spread Quickly

Messages like this often go viral because they use powerful psychological triggers, including:

- Urgency (“30 minutes can ruin your life”)

- Fear (“They don’t want your money. They want your identity.”)

- An authoritative tone

- Instructions to forward immediately

Fear spreads faster than facts, especially when new technologies like AI are involved.

People are more likely to share warnings that feel urgent, even if they are exaggerated.

Practical Safety Tips Without Panic

Instead of reacting with fear, focus on simple, effective digital safety practices:

- Never share your OTPs with anyone

- Avoid logging into personal accounts on unfamiliar devices

- Be cautious when handling strangers’ phones

- Disconnect suspicious or unexpected video calls

- Limit sharing sensitive personal content publicly

- Enable multi-factor authentication on important accounts

These basic precautions significantly reduce your risk.

You do not need paranoia — you need awareness.

Final Verdict: Real Technology, Exaggerated Scenario

The viral “AI biometric cloning in 30 minutes” warning contains a mix of truth and exaggeration.

AI voice and face cloning technologies are real.

But the claim that scammers can instantly steal your fingerprint and drain your finances within minutes simply by handing you a phone is highly unlikely.

The real threat is not magical AI identity theft in public spaces.

The real threat is social engineering — scammers manipulating trust, urgency, and human behavior.

Closing Thoughts

Artificial intelligence is powerful, and its misuse is a legitimate concern. But misinformation and fear-based warnings can be just as harmful, causing panic and confusion.

Before sharing alarming messages, take a moment to verify the facts.

Cybersecurity is strongest when guided by knowledge, awareness, and calm decision-making — not fear.

Media Disclaimer: This report is based on internal and external research obtained through various means. The information provided is for reference purposes only, and users bear full responsibility for their reliance on it. Summitsystemsissp assumes no liability for the accuracy of this information or the consequences of using it.